Deploying a Kubernetes Cluster from Binaries (Detailed Walkthrough)

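Contents

I. Kubernetes Cluster Deployment Architecture Planning

II. Preparing the Environment

III. Deploying the etcd Cluster (1. Generating certificates; 2. Installing etcd; 3. Deploying the Flannel network)

IV. Deploying Components on the Master Node (1. Generating certificates; 2. Deploying the apiserver component; 3. Deploying the scheduler component; 4. Deploying the controller-manager component)

V. Deploying Components on the Node Machines (1. Deploying kubelet)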

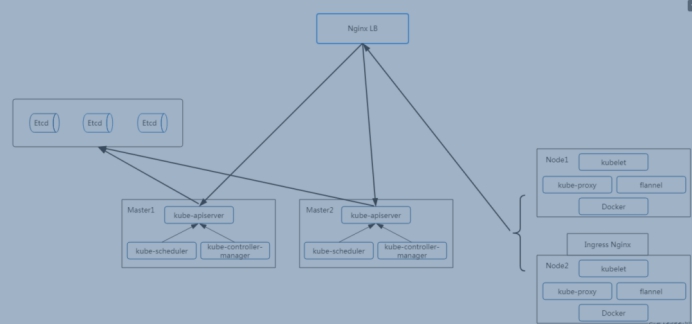

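I. Kubernetes Cluster Deployment Architecture Planning

Goals:

1. Plan the Kubernetes cluster deployment architecture

2. Deploy the etcd cluster

3. Install Docker on the Node machines

4. Deploy the Flannel network plugin

5. Deploy components on the Master node

6. Deploy components on the Node machines

7. Check the cluster status

8. Run a test example

9. Deploy the Dashboard (Web UI)

Operating system:

CentOS7.4_x64

Software versions:

Docker 19.09.0-ce

Kubernetes 1.11

Server roles, IPs, and components: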

k8s-master1

192.168.246.162 kube-apiserver,kube-controller-manager,kube-scheduler,etcd

k8s-master2

192.168.246.163 kube-apiserver,kube-controller-manager,kube-scheduler,etcd

k8s-node1

192.168.246.164 kubelet,kube-proxy,docker,flannel,etcd

k8s-node2

192.168.246.165 kubelet,kube-proxy,docker,flannel

Master load balancer

192.168.246.166 LVS

Image registry

10.206.240.188 Harbor

Machine requirements:

2G

Every host must be given its own hostname, and all hosts must be able to resolve each other by name:

[root@k8s-master1 ~]# vim /etc/hosts

192.168.246.162 k8s-master1

192.168.246.163 k8s-master2

192.168.246.164 k8s-node1

192.168.246.165 k8s-node2

192.168.246.166 lvs-server
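The same hosts entries have to be added on every machine, so a small helper can save retyping. The following is only a sketch: the function name `add_host_entry` is hypothetical (not part of any tool), and it assumes a POSIX shell with `grep` available. It appends an entry only when the hostname is not already present, so it can be re-run safely.

```shell
# Append "IP NAME" to a hosts file unless NAME is already listed.
# add_host_entry is a hypothetical helper; the third argument
# defaults to /etc/hosts and exists mainly to allow dry runs.
add_host_entry() {
    ip="$1"; name="$2"; file="${3:-/etc/hosts}"
    # -w matches whole words only, so k8s-node1 does not match k8s-node10;
    # -F treats the name as a fixed string rather than a regex.
    if ! grep -qwF -- "$name" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}
```

For example, `add_host_entry 192.168.246.164 k8s-node1` run as root on each machine adds the node1 entry once, no matter how often it is repeated.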

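Disable the firewall and SELinux on every host.

Load balancer:

Cloud environments:

A cloud load balancer such as SLB can be used.

Non-cloud environments:

Use a mainstream software load balancer such as LVS, HAProxy, or Nginx.

This guide uses Nginx as the load balancer in front of the apiservers.

Install Nginx and use its stream module as a layer-4 reverse proxy, configured as follows: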

user nginx;

worker_processes 4;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.246.162:6443;

server 192.168.246.163:6443;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}
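When the number of masters changes, only the `upstream` block of the configuration above needs to be regenerated. As a rough illustration, the sketch below prints such a block from a port and a list of apiserver IPs. The function name `render_upstream` is made up for this example, and the output still has to be pasted into the `stream { }` section by hand.

```shell
# Print an nginx "upstream" block for the given port and apiserver IPs.
# render_upstream is a hypothetical helper, not an nginx tool.
render_upstream() {
    port="$1"; shift
    printf 'upstream k8s-apiserver {\n'
    for ip in "$@"; do
        printf '    server %s:%s;\n' "$ip" "$port"
    done
    printf '}\n'
}
```

Calling `render_upstream 6443 192.168.246.162 192.168.246.163` reproduces the upstream block used in this guide; after editing the real file, `nginx -t` can be used to check the syntax before reloading.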

II. Preparing the Environment

Three machines running CentOS 7.4, all able to resolve each other by name.

Disable the firewall and SELinux.

[root@k8s-master ~]# vim /etc/hosts

192.168.96.134 k8s-master

192.168.96.135 k8s-node1

192.168.96.136 k8s-node2

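III. Deploying the etcd Cluster

1. Generating certificates

Use cfssl to generate self-signed certificates. Any machine will do: generate the certificates wherever is convenient and copy them to wherever they are needed. For now it is enough to know how to generate and use them; no deeper study is required.

Download the cfssl tools. These are ready-to-run binaries: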

[root@k8s-master1 ~]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@k8s-master1 ~]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@k8s-master1 ~]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

[root@k8s-master1 ~]# chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64

[root@k8s-master1 ~]# mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@k8s-master1 ~]# mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@k8s-master1 ~]# mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

Generate the etcd certificates:

Create the following three files:

[root@k8s-master1 ~]# mkdir cert

[root@k8s-master1 ~]# cd cert/

[root@k8s-master1 cert]# vim ca-config.json #signing configuration for the CA

[root@k8s-master1 cert]# cat ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

[root@k8s-master1 cert]# vim ca-csr.json #certificate signing request for the CA

[root@k8s-master1 cert]# cat ca-csr.json

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

[root@k8s-master1 cert]# vim server-csr.json #certificate signing request for the etcd servers; hosts must list the IP of every etcd node

[root@k8s-master1 cert]# cat server-csr.json

{

"CN": "etcd",

"hosts": [

"192.168.246.162",

"192.168.246.163",

"192.168.246.164",

"192.168.246.165"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

Generate the certificates:

[root@k8s-master1 cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

[root@k8s-master1 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

[root@k8s-master1 cert]# ls *pem

ca-key.pem ca.pem server-key.pem server.pem
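Because the server certificate is only valid for the IPs listed under `hosts`, a missing etcd node IP is an easy mistake to make. One way to reduce that risk is to generate the CSR file from the node list instead of typing it. The sketch below assumes the same CN, key, and names fields as above; `render_etcd_csr` is a hypothetical helper name.

```shell
# Print an etcd server CSR (cfssl JSON format) whose "hosts" array
# contains every IP passed as an argument.
# render_etcd_csr is a hypothetical helper, not part of cfssl.
render_etcd_csr() {
    printf '{\n  "CN": "etcd",\n  "hosts": [\n'
    sep=''
    for ip in "$@"; do
        # The separator is empty before the first entry and
        # comma + newline before every later one.
        printf '%s    "%s"' "$sep" "$ip"
        sep=',
'
    done
    printf '\n  ],\n'
    printf '  "key": { "algo": "rsa", "size": 2048 },\n'
    printf '  "names": [ { "C": "CN", "L": "BeiJing", "ST": "BeiJing" } ]\n'
    printf '}\n'
}
```

Redirecting the output, for example `render_etcd_csr 192.168.246.162 192.168.246.164 192.168.246.165 > server-csr.json`, yields a file that can be passed to the `cfssl gencert` command shown above.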

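2. Installing etcd

Binary package download page: https://github.com/coreos/etcd/releases/tag/v3.2.12

The steps below are identical on all three planned etcd nodes. The only difference is that the server IPs in the etcd configuration file must be those of the current node.

Download and unpack the binary package (on all three machines):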

# wget https://github.com/etcd-io/etcd/releases/download/v3.2.12/etcd-v3.2.12-linux-amd64.tar.gz

# mkdir /opt/etcd/{bin,cfg,ssl} -p

# tar zxvf etcd-v3.2.12-linux-amd64.tar.gz

# mv etcd-v3.2.12-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

Create the etcd configuration file:

# cd /opt/etcd/cfg/

# vim etcd

# cat /opt/etcd/cfg/etcd

#[Member]

# Node name; must be different on every node

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

# In the four URLs below, use the IP of the node this file lives on

ETCD_LISTEN_PEER_URLS="https://192.168.246.162:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.246.162:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.246.162:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.246.162:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.246.162:2380,etcd02=https://192.168.246.164:2380,etcd03=https://192.168.246.165:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

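Parameter descriptions:

* ETCD_NAME node name; different on every node

* ETCD_DATA_DIR data directory (etcd is a database that persists to disk rather than memory, and all Kubernetes state is stored in it)

* ETCD_LISTEN_PEER_URLS listen address for cluster (peer) traffic

* ETCD_LISTEN_CLIENT_URLS listen address for client traffic

* ETCD_INITIAL_ADVERTISE_PEER_URLS peer address advertised to the rest of the cluster

* ETCD_ADVERTISE_CLIENT_URLS client address advertised to clients

* ETCD_INITIAL_CLUSTER addresses of all cluster members

* ETCD_INITIAL_CLUSTER_TOKEN cluster token

* ETCD_INITIAL_CLUSTER_STATE state when joining: new for a new cluster, existing to join a cluster that already exists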
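Since only the node name and IP differ between the three configuration files, they can be produced from one template. The following sketch shows the idea; `render_etcd_cfg` is a hypothetical function name, and the fixed values (data directory, token, ports) are simply copied from the file above.

```shell
# Print an /opt/etcd/cfg/etcd file for one node.
# Arguments: node name, node IP, full ETCD_INITIAL_CLUSTER string.
# render_etcd_cfg is a hypothetical helper, not part of etcd.
render_etcd_cfg() {
    name="$1"; ip="$2"; cluster="$3"
    cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}
```

On the second etcd node this might be used as `render_etcd_cfg etcd02 192.168.246.164 "$CLUSTER" > /opt/etcd/cfg/etcd`, where `CLUSTER` holds the same member list on every node.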

Manage etcd with systemd:

# vim /usr/lib/systemd/system/etcd.service

# cat /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd

ExecStart=/opt/etcd/bin/etcd \

--name=${ETCD_NAME} \

--data-dir=${ETCD_DATA_DIR} \

--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=${ETCD_INITIAL_CLUSTER_STATE} \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Copy the certificates generated earlier to the location referenced in the unit file, then scp them from the master to the other two machines:

# cd /root/cert/

# cp ca*pem server*pem /opt/etcd/ssl

Copy them directly to the other two etcd machines:

[root@k8s-master cert]# scp ca*pem server*pem k8s-node1:/opt/etcd/ssl

[root@k8s-master cert]# scp ca*pem server*pem k8s-node2:/opt/etcd/ssl

Start etcd on all nodes and enable it at boot:

# systemctl daemon-reload

# systemctl start etcd

# systemctl enable etcd

Once all nodes are deployed, check the etcd cluster health (this can be run on any of the three machines):

# /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.246.162:2379,https://192.168.246.164:2379,https://192.168.246.165:2379" cluster-health

member 18218cfabd4e0dea is healthy: got healthy result from https://10.206.240.111:2379

member 541c1c40994c939b is healthy: got healthy result from https://10.206.240.189:2379

member a342ea2798d20705 is healthy: got healthy result from https://10.206.240.188:2379

cluster is healthy

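If you see output like the above, the cluster was deployed successfully. (The sample output was captured in a different environment, so its IPs differ from the ones used in this guide.)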
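For scripted checks, the textual output of `cluster-health` can be turned into an exit status. The sketch below assumes the etcdctl v2 output format shown above (one `member ... is healthy` line per member followed by `cluster is healthy`); `etcd_cluster_ok` is a hypothetical name.

```shell
# Read `etcdctl cluster-health` output on stdin and succeed only if
# the final verdict is healthy and no member is reported as
# unhealthy or unreachable.
# etcd_cluster_ok is a hypothetical helper, not part of etcdctl.
etcd_cluster_ok() {
    awk '
        /is unhealthy|is unreachable/ { bad = 1 }
        /^cluster is healthy$/        { ok = 1 }
        END { if (ok && !bad) exit 0; exit 1 }
    '
}
```

It is meant to sit at the end of the etcdctl pipeline, for example `etcdctl ... cluster-health | etcd_cluster_ok && echo "etcd OK"`.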

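If something goes wrong, check the logs first: /var/log/messages or journalctl -u etcd

Error:

Jan 15 12:06:55 k8s-master1 etcd: request cluster ID mismatch (got 99f4702593c94f98 want cdf818194e3a8c32)

Fix: during setup, a single etcd instance had been started on its own as a test, so its data directory still holds membership information from that run. When the other members start for the first time and bootstrap the cluster, that stale information no longer matches. Delete the old member data on every node as follows, then start etcd again: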

[root@k8s-master1 default.etcd]# pwd

/var/lib/etcd/default.etcd

[root@k8s-master1 default.etcd]# rm -rf member/

========================================================

Installing Docker on the Node machines

# yum remove docker \

docker-client \

docker-client-latest \

docker-common \

docker-latest \

docker-latest-logrotate \

docker-logrotate \

docker-selinux \

docker-engine-selinux \

docker-engine

# yum install -y yum-utils device-mapper-persistent-data lvm2 git

# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# yum install docker-ce -y

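Start Docker and enable it at boot:

# systemctl start docker

# systemctl enable docker

# curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://bc437cce.m.daocloud.io #configure a registry mirror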

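3. Deploying the Flannel network

Flannel stores its subnet information in etcd, so first make sure etcd is reachable, then write the predefined network range into it:

Flannel is deployed on the Node machines. It provides the network that containers use to talk to each other, so unless workloads run on the master there is no need to deploy it there.

[root@k8s-master ~]# scp -r cert/ k8s-node1:/root/ #copy the generated certificates to the other machines

[root@k8s-master ~]# scp -r cert/ k8s-node2:/root/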

[root@k8s-master ~]# cd cert/

/opt/etcd/bin/etcdctl \

--ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \

--endpoints="https://192.168.246.162:2379,https://192.168.246.164:2379,https://192.168.246.165:2379" \

set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

=========================================================================================

#Note: perform the following steps on every planned Node machine.

Download the binary package:

# wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

# tar zxvf flannel-v0.10.0-linux-amd64.tar.gz

# mkdir -pv /opt/kubernetes/bin

# mv flanneld mk-docker-opts.sh /opt/kubernetes/bin

Configure Flannel:

# mkdir -pv /opt/kubernetes/cfg/

# vim /opt/kubernetes/cfg/flanneld

# cat /opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.246.162:2379,https://192.168.246.164:2379,https://192.168.246.165:2379 --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem"

Manage Flannel with systemd:

# vim /usr/lib/systemd/system/flanneld.service

# cat /usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

Configure Docker to start with the subnet assigned by Flannel (the original unit file can simply be overwritten):

# vim /usr/lib/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

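Copy the certificate files from the master to node1 and node2. The nodes have no certificates of their own, but flanneld needs them to reach etcd. Create the directory on each node first, then copy from the master:

# mkdir -pv /opt/etcd/ssl/

# scp /opt/etcd/ssl/* k8s-node1:/opt/etcd/ssl/

# scp /opt/etcd/ssl/* k8s-node2:/opt/etcd/ssl/

Start flanneld and restart Docker: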

# systemctl daemon-reload

# systemctl start flanneld

# systemctl enable flanneld

# systemctl daemon-reload

# systemctl restart docker

Note: if flanneld fails to start, check that the network range written to etcd is correct.

Verify that the settings took effect:

[root@k8s-node1 ~]# ps -ef | grep docker

root 3632 1 1 22:19 ? 00:00:00 /usr/bin/dockerd --bip=172.17.77.1/24 --ip-masq=false --mtu=1450

# ip addr

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN

link/ether 02:42:cd:f6:c9:cc brd ff:ff:ff:ff:ff:ff

inet 172.17.77.1/24 brd 172.17.77.255 scope global docker0

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN

link/ether ba:96:dc:cc:25:e0 brd ff:ff:ff:ff:ff:ff

inet 172.17.77.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::b896:dcff:fecc:25e0/64 scope link

valid_lft forever preferred_lft forever

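Notes:

1. Make sure docker0 and flannel.1 are in the same subnet.

2. Test connectivity between nodes by reaching the docker0 IP of another Node from the current one. Example: from node1, ping the docker0 address of node2: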

[root@k8s-node1 ~]# ping 172.17.33.1

PING 172.17.33.1 (172.17.33.1) 56(84) bytes of data.

64 bytes from 172.17.33.1: icmp_seq=1 ttl=64 time=0.520 ms

64 bytes from 172.17.33.1: icmp_seq=2 ttl=64 time=0.972 ms

64 bytes from 172.17.33.1: icmp_seq=3 ttl=64 time=0.642 ms

If the ping succeeds, Flannel was deployed successfully. If not, check the logs with journalctl -u flanneld. (This is a good point to take a VM snapshot.)

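IV. Deploying Components on the Master Node

Before deploying Kubernetes, make sure etcd, Flannel, and Docker are all working properly. If they are not, fix the problem before continuing.

1. Generating certificates

Perform these steps on the master node. They create the certificates for the apiserver; other services must authenticate with a certificate when they access it.

Create the CA certificate: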

[root@k8s-master1 ~]# mkdir -p /opt/crt/

[root@k8s-master1 ~]# cd /opt/crt/

# vim ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

# vim ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

[root@k8s-master1 crt]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

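Generate the apiserver certificate. In hosts, 10.0.0.1 is the first address of the Service IP range (the virtual network also used later for DNS) and must not be changed. The 192.168.246.x entries are the addresses under which the apiserver is reached: 162 is the master, and 163 and 166 are added here for master2 and the load balancer from the plan in section I. JSON does not allow comments, so keep notes like these out of the file:

[root@k8s-master1 crt]# vim server-csr.json

# cat server-csr.json

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.246.162",

"192.168.246.163",

"192.168.246.166",

"192.168.246.164",

"192.168.246.165",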

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

[root@k8s-master1 crt]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

Generate the kube-proxy certificate:

[root@k8s-master1 crt]# vim kube-proxy-csr.json

# cat kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

[root@k8s-master1 crt]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

The following certificate files are generated in the end:

[root@k8s-master1 crt]# ls *pem

ca-key.pem ca.pem kube-proxy-key.pem kube-proxy.pem server-key.pem server.pem

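2. Deploying the apiserver component (on the master node)

Binary package download page: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.11.md

Downloading this one package (kubernetes-server-linux-amd64.tar.gz) is enough; it contains all the required components.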

# wget https://dl.k8s.io/v1.11.10/kubernetes-server-linux-amd64.tar.gz

# mkdir /opt/kubernetes/{bin,cfg,ssl} -pv

# tar zxvf kubernetes-server-linux-amd64.tar.gz

# cd kubernetes/server/bin

# cp kube-apiserver kube-scheduler kube-controller-manager kubectl /opt/kubernetes/bin

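Copy the certificates from the machine where they were generated to master1 and master2. (In this guide the certificates were generated on master1, so no scp is needed for master1 itself.)

# scp server.pem server-key.pem ca.pem ca-key.pem k8s-master1:/opt/kubernetes/ssl/

# scp server.pem server-key.pem ca.pem ca-key.pem k8s-master2:/opt/kubernetes/ssl/

On master1, a local copy is enough: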

[root@k8s-master1 bin]# cd /opt/crt/

# cp server.pem server-key.pem ca.pem ca-key.pem /opt/kubernetes/ssl/

Create the token file:

[root@k8s-master1 crt]# cd /opt/kubernetes/cfg/

# vim token.csv

# cat /opt/kubernetes/cfg/token.csv

674c457d4dcf2eefe4920d7dbb6b0ddc,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

Column 1: a random string, which you can generate yourself

Column 2: user name

Column 3: UID

Column 4: user group
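The first column should be unique to each cluster rather than copied from a tutorial. One common recipe for a 32-character hex string reads 16 random bytes from `/dev/urandom`; the sketch below wraps it together with a formatter for the token.csv line. Both function names are hypothetical, and the user name, UID, and group are the ones used in this guide.

```shell
# Generate a 32-character lowercase hex token from 16 random bytes.
# gen_bootstrap_token is a hypothetical helper name.
gen_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Print one token.csv line: token,user,uid,"group"
# (user, UID and group values as used in this guide).
render_token_csv() {
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}
```

A possible usage is `render_token_csv "$(gen_bootstrap_token)" > /opt/kubernetes/cfg/token.csv`. The same token value must later be used as BOOTSTRAP_TOKEN when the bootstrap kubeconfig is created.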

Create the apiserver configuration file:

[root@k8s-master1 cfg]# pwd

/opt/kubernetes/cfg

[root@k8s-master1 cfg]# vim kube-apiserver

[root@k8s-master1 cfg]# cat kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.246.162:2379,https://192.168.246.164:2379,https://192.168.246.165:2379 \

--bind-address=192.168.246.162 \

--secure-port=6443 \

--advertise-address=192.168.246.162 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

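The certificate paths must point at the files generated earlier so that the apiserver can connect to etcd. Set --bind-address and --advertise-address to the IP of the master on which this apiserver runs, and leave --service-cluster-ip-range at 10.0.0.0/24, which the rest of this guide relies on. Do not put comments inside the quoted option string; they would become part of the value.

Parameter descriptions:

* --logtostderr log to standard error

* --v log verbosity level

* --etcd-servers etcd cluster addresses

* --bind-address listen address

* --secure-port HTTPS port

* --advertise-address address advertised to the cluster

* --allow-privileged allow privileged containers

* --service-cluster-ip-range virtual IP range for Services

* --enable-admission-plugins admission control plugins

* --authorization-mode authorization modes; enables RBAC and Node authorization

* --enable-bootstrap-token-auth enable TLS bootstrap token authentication, covered later

* --token-auth-file token file

* --service-node-port-range port range allocated to NodePort Services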

Manage the apiserver with systemd:

[root@k8s-master1 cfg]# cd /usr/lib/systemd/system

# vim kube-apiserver.service

# cat /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start:

# systemctl daemon-reload

# systemctl enable kube-apiserver

# systemctl start kube-apiserver

# systemctl status kube-apiserver

3. Deploying the scheduler component (on the master node)

Create the scheduler configuration file:

[root@k8s-master1 cfg]# vim /opt/kubernetes/cfg/kube-scheduler

# cat /opt/kubernetes/cfg/kube-scheduler

KUBE_SCHEDULER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect"

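Parameter descriptions:

* --master connect to the local apiserver

* --leader-elect elect a leader automatically when several instances of the component are running (HA)

Manage the scheduler with systemd: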

[root@k8s-master1 cfg]# cd /usr/lib/systemd/system/

# vim kube-scheduler.service

# cat /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler

ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start:

# systemctl daemon-reload

# systemctl enable kube-scheduler

# systemctl start kube-scheduler

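4. Deploying the controller-manager component

On the master node, create the controller-manager configuration file. Keep --service-cluster-ip-range at 10.0.0.0/24; it must match the apiserver setting.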

[root@k8s-master1 ~]# cd /opt/kubernetes/cfg/

[root@k8s-master1 cfg]# vim kube-controller-manager

# cat /opt/kubernetes/cfg/kube-controller-manager

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect=true \

--address=127.0.0.1 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem"

Manage the controller-manager with systemd:

[root@k8s-master1 cfg]# cd /usr/lib/systemd/system/

[root@k8s-master1 system]# vim kube-controller-manager.service

# cat /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start:

# systemctl daemon-reload

# systemctl enable kube-controller-manager

# systemctl start kube-controller-manager

With all components started, use kubectl to check the status of the cluster components:

[root@k8s-master1 ~]# /opt/kubernetes/bin/kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-2 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

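Output like the above means all components are healthy.

Configuring the Master load balancer

Master HA really means apiserver HA. The other master components, controller-manager and scheduler, can elect a leader through etcd (--leader-elect), while the apiserver is designed to scale horizontally, so making it highly available is easy: put a load balancer in front of it that distributes requests across the instances.

On a private cloud platform, add an internal layer-4 LB that is not exposed externally and only balances apiserver traffic. The Nginx stream configuration in section I is one such setup.

LB configuration on public clouds is much the same; once the data flow is understood, it is easy to configure.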

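V. Deploying Components on the Node Machines

Once the master apiserver has TLS authentication enabled, the kubelet on each node must use a valid certificate signed by the CA in order to communicate with the apiserver and join the cluster. Signing certificates by hand becomes tedious when there are many nodes, which is why the TLS Bootstrapping mechanism exists: the kubelet connects as a low-privilege user and requests a certificate from the apiserver automatically, and the kubelet's certificate is signed dynamically.

The authentication workflow is roughly as shown in the figure:

![TLS bootstrapping workflow](https://img-blog.csdnimg.cn/67b1682845ab4cf0a22cc15814eca7aa.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5ZK46JuL6buE5rS-,size_20,color_FFFFFF,t_70,g_se,x_16)

----------------------The following steps are performed on the master node:---------------------------

Bind the kubelet-bootstrap user to the system cluster role: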

[root@k8s-master1 ~]# /opt/kubernetes/bin/kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

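Create the kubeconfig files:

Run the following commands in the directory where the Kubernetes certificates were generated:

[root@k8s-master1 ~]# cd /opt/crt/

Specify the apiserver address (the internal load balancer address in an HA setup):

[root@k8s-master1 crt]# KUBE_APISERVER="https://192.168.246.162:6443" #your master's IP; in an HA cluster use the load balancer IP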

[root@k8s-master1 crt]# BOOTSTRAP_TOKEN=674c457d4dcf2eefe4920d7dbb6b0ddc

# Set the cluster parameters

[root@k8s-master1 crt]# /opt/kubernetes/bin/kubectl config set-cluster kubernetes \

--certificate-authority=ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set the client credentials

[root@k8s-master crt]# /opt/kubernetes/bin/kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

[root@k8s-master crt]# /opt/kubernetes/bin/kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Select the default context

[root@k8s-master crt]# /opt/kubernetes/bin/kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#====================================================================================

# Create the kube-proxy kubeconfig file

[root@k8s-master1 crt]# /opt/kubernetes/bin/kubectl config set-cluster kubernetes \

--certificate-authority=ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 crt]# /opt/kubernetes/bin/kubectl config set-credentials kube-proxy \

--client-certificate=kube-proxy.pem \

--client-key=kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 crt]# /opt/kubernetes/bin/kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 crt]# /opt/kubernetes/bin/kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 crt]# ls

bootstrap.kubeconfig kube-proxy.kubeconfig

#Important: copy these two files to /opt/kubernetes/cfg on every Node machine.

[root@k8s-master1 crt]# scp *.kubeconfig k8s-node1:/opt/kubernetes/cfg/

[root@k8s-master1 crt]# scp *.kubeconfig k8s-node2:/opt/kubernetes/cfg/

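1. Deploying the kubelet component

----------------------The following steps are performed on the node machines:---------------------------

#Copy kubelet and kube-proxy from the binary package downloaded earlier into /opt/kubernetes/bin.

First copy the package over from the master: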

[root@k8s-master1 ~]# scp kubernetes-server-linux-amd64.tar.gz k8s-node1:/root/

[root@k8s-master1 ~]# scp kubernetes-server-linux-amd64.tar.gz k8s-node2:/root/

[root@k8s-node1 ~]# tar xzf kubernetes-server-linux-amd64.tar.gz

[root@k8s-node1 ~]# cd kubernetes/server/bin/

[root@k8s-node1 bin]# cp kubelet kube-proxy /opt/kubernetes/bin/

#=====================================================================================

Create the kubelet configuration file on both node machines. Set --hostname-override to each node's own IP, and pull the pause image referenced by --pod-infra-container-image in advance. Do not put comments inside or after the quoted option string.

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=true \
--v=4 \
--hostname-override=192.168.246.164 \
--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \
--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \
--config=/opt/kubernetes/cfg/kubelet.config \
--cert-dir=/opt/kubernetes/ssl \
--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

[root@k8s-node1 ~]# docker pull registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

[root@k8s-node2 ~]# docker pull registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

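Parameter descriptions:

* --hostname-override host name shown in the cluster

* --kubeconfig location of the kubeconfig file, which is generated automatically

* --bootstrap-kubeconfig the bootstrap.kubeconfig file generated earlier

* --cert-dir directory in which issued certificates are stored

* --pod-infra-container-image image that manages the Pod network

The /opt/kubernetes/cfg/kubelet.config file looks like this: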

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 192.168.246.164 #this machine's own IP
port: 10250
readOnlyPort: 10255
cgroupDriver: cgroupfs
clusterDNS: ["10.0.0.2"] #do not change; use exactly this IP
clusterDomain: cluster.local.
failSwapOn: false
authentication:
  anonymous:
    enabled: true
  webhook:
    enabled: false

Manage the kubelet with systemd:

# vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet $KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

Start:

# systemctl daemon-reload

# systemctl enable kubelet

# systemctl start kubelet

[root@k8s-master ~]# /opt/kubernetes/bin/kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-F5AQ8SeoyloVrjPuzSbzJnFKQaUsier7EGvNFXLKTqM 17s kubelet-bootstrap Pending

node-csr-bjeHSWXOuUDSHganJPL_hDz_8jjYhM2FQyTkbA9pM0Q 18s kubelet-bootstrap Pending

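Approve the nodes on the master:

After starting, a node has not yet joined the cluster; it has to be approved manually. On the master, list the nodes requesting a signature (kubectl get csr, as above) and approve each one:

[root@k8s-master1 ~]# /opt/kubernetes/bin/kubectl certificate approve XXXXID

Note: XXXXID is the value in the NAME column above.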

[root@k8s-master1 ~]# /opt/kubernetes/bin/kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr--1TVDzcozo7NoOD3WS2t9xLQqNunsVXj_i2AQ5x1mbs 1m kubelet-bootstrap Approved,Issued

node-csr-L0wqvr69oy8rzXwFm1u1uNx4aEMOOvd_RWPxaAERn_w 27m kubelet-bootstrap Approved,Issued
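With more than a couple of nodes, copying each request name by hand gets tedious. The sketch below filters the `kubectl get csr` table for requests that are still Pending, assuming the four-column layout shown above with CONDITION as the last column. The function name `pending_csrs` is hypothetical.

```shell
# Read `kubectl get csr` output on stdin and print the NAME of every
# request whose last column (CONDITION) is exactly "Pending".
# pending_csrs is a hypothetical helper, not a kubectl subcommand.
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}
```

The names can then be approved in a loop, for example `kubectl get csr | pending_csrs | while read -r n; do kubectl certificate approve "$n"; done`. Review the list before approving, since approval grants the node a client certificate.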

Check the cluster nodes:

[root@k8s-master1 ~]# /opt/kubernetes/bin/kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.246.164 Ready <none> 1m v1.11.10

192.168.246.165 Ready <none> 17s v1.11.10

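2. Deploying the kube-proxy component

Create the kube-proxy configuration file, again on every node. Set --hostname-override to each node's own IP. The guide keeps --cluster-cidr at 10.0.0.0/24 and advises not to change it; note that this flag is meant to describe the Pod network range, which is 172.17.0.0/16 with the Flannel configuration above. Do not put comments inside the quoted option string.

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kube-proxy

# cat /opt/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \
--v=4 \
--hostname-override=192.168.246.164 \
--cluster-cidr=10.0.0.0/24 \
--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"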

Manage kube-proxy with systemd:

[root@k8s-node1 ~]# cd /usr/lib/systemd/system

# cat /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start:

# systemctl daemon-reload

# systemctl enable kube-proxy

# systemctl start kube-proxy

Check the node status from the master:

[root@k8s-master1 ~]# /opt/kubernetes/bin/kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.246.164 Ready <none> 19m v1.11.10

192.168.246.165 Ready <none> 18m v1.11.10

Check the component status:

[root@k8s-master1 ~]# /opt/kubernetes/bin/kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

etcd-2 Healthy {"health": "true"}

=====================================================================================

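VI. Deploying the Dashboard (Web UI)

* dashboard-deployment.yaml #deploys the Pod that serves the web UI

* dashboard-rbac.yaml #grants access to the apiserver to read cluster information

* dashboard-service.yaml #publishes the service for external access

Create a directory: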

[root@k8s-master ~]# mkdir webui

[root@k8s-master ~]# cd webui/

[root@k8s-master webui]# cat dashboard-deployment.yaml

apiVersion: apps/v1beta2
kind: Deployment
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
    spec:
      serviceAccountName: kubernetes-dashboard
      containers:
      - name: kubernetes-dashboard
        image: registry.cn-hangzhou.aliyuncs.com/kube_containers/kubernetes-dashboard-amd64:v1.8.1
        resources:
          limits:
            cpu: 100m
            memory: 300Mi
          requests:
            cpu: 100m
            memory: 100Mi
        ports:
        - containerPort: 9090
          protocol: TCP
        livenessProbe:
          httpGet:
            scheme: HTTP
            path: /
            port: 9090
          initialDelaySeconds: 30
          timeoutSeconds: 30
      tolerations:
      - key: "CriticalAddonsOnly"
        operator: "Exists"

[root@k8s-master webui]# cat dashboard-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
  name: kubernetes-dashboard
  namespace: kube-system
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard
  namespace: kube-system

[root@k8s-master webui]# cat dashboard-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  type: NodePort
  selector:
    k8s-app: kubernetes-dashboard
  ports:
  - port: 80
    targetPort: 9090

[root@k8s-master webui]# /opt/kubernetes/bin/kubectl create -f dashboard-rbac.yaml

[root@k8s-master webui]# /opt/kubernetes/bin/kubectl create -f dashboard-deployment.yaml

[root@k8s-master webui]# /opt/kubernetes/bin/kubectl create -f dashboard-service.yaml

Wait a few minutes, then check the resource status.

List everything in the kube-system namespace:

[root@k8s-master webui]# /opt/kubernetes/bin/kubectl get all -n kube-system

NAME READY STATUS RESTARTS AGE

pod/kubernetes-dashboard-d9545b947-442ft 1/1 Running 0 21m

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes-dashboard NodePort 10.0.0.143 <none> 80:47520/TCP 21m

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

deployment.apps/kubernetes-dashboard 1 1 1 1 21m

NAME DESIRED CURRENT READY AGE

replicaset.apps/kubernetes-dashboard-d9545b947 1 1 1 21m

Check the access port.

List the Services in the namespace:

[root@k8s-master webui]# /opt/kubernetes/bin/kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes-dashboard NodePort 10.0.0.143 <none> 80:47520/TCP 22m

Open the dashboard in a browser using a node IP plus the NodePort shown above (47520 in this sample output).

Testing

==========================================================

Run a test example (install the Docker service on the master node first)

Create an Nginx web deployment to check that the cluster works properly:

# /opt/kubernetes/bin/kubectl run nginx --image=daocloud.io/nginx --replicas=3

# /opt/kubernetes/bin/kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

# /opt/kub.../bin/kubectl delete deployment --all #cleanup: removes all deployments, run only when you are done testing

Check from the master.

List the Pods and Services:

# /opt/kubernetes/bin/kubectl get pods #this may take a moment

NAME READY STATUS RESTARTS AGE

nginx-64f497f8fd-fjgt2 1/1 Running 3 28d

nginx-64f497f8fd-gmstq 1/1 Running 3 28d

nginx-64f497f8fd-q6wk9 1/1 Running 3 28d

Show the details of a Pod:

# /opt/kubernetes/bin/kubectl describe pod nginx-64f497f8fd-fjgt2

# /opt/kubernetes/bin/kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.0.0.1 <none> 443/TCP 28d

nginx NodePort 10.0.0.175 <none> 88:38696/TCP 28d

Access the service through a node IP plus the NodePort.

Open a browser and enter: http://192.168.246.164:38696
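The NodePort is assigned at random from the configured range, so it will differ on every cluster. As a small sketch, the port can be extracted from the `kubectl get svc` table instead of being read off by eye. This assumes the column layout shown above, with PORT(S) as the fifth column in the form `port:nodePort/protocol`; `node_port_of` is a hypothetical name.

```shell
# Read `kubectl get svc` output on stdin and print the NodePort of
# the named Service. Only rows whose TYPE column is NodePort are
# considered. node_port_of is a hypothetical helper.
node_port_of() {
    awk -v svc="$1" '
        $1 == svc && $2 == "NodePort" {
            # "88:38696/TCP" splits into 88, 38696, TCP
            split($5, parts, "[:/]")
            print parts[2]
        }
    '
}
```

For instance, `kubectl get svc | node_port_of nginx` prints the port to append to the node IP. kubectl can also return the value directly with `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'`.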

Congratulations, the cluster has been deployed successfully!
