Installing a Single-Master K8s Cluster: Detailed Configuration and Best Practices for Deploying an nginx Service

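Overview

Kubernetes, abbreviated K8s, gets its short name by replacing the eight letters "ubernete" in the middle of the word with the digit 8. It is an open-source system for managing containerized applications across multiple hosts in a cloud platform. Its goal is to make deploying containerized applications simple and powerful, and it provides mechanisms for application deployment, scheduling, updating, and maintenance.

The traditional way to deploy an application is to install it with plugins or scripts. The drawback is that the application's runtime, configuration, management, and entire lifecycle are tied to the host operating system, which makes upgrades, updates, and rollbacks difficult. Virtual machines can provide some of the same functionality, but they are heavyweight and hurt portability.

The newer approach is to deploy containers. Containers are isolated from one another, each has its own file system, processes in different containers do not affect each other, and compute resources can be allocated per container. Compared with virtual machines, containers deploy quickly, and because they are decoupled from the underlying infrastructure and the host file system, they can be moved between clouds and between operating system versions.

Containers use few resources and deploy quickly. Each application can be packaged as a container image, and the one-to-one relationship between application and container is a further advantage: the image can be created at the build or release stage, because the application does not need to be combined with the rest of the application stack and does not depend on the production infrastructure. This gives a consistent environment from development through testing to production. Containers are also lighter and more "transparent" than virtual machines, which makes them easier to monitor and manage.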

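Before you begin

One or more machines running the following system:

CentOS 7

2 GB or more of RAM per machine

2 or more CPU cores

Network connectivity between all machines in the cluster (a public or a private network is fine)

No two nodes may share the same hostname

Swap disabled. You must disable swap for the kubelet to work properly

Hardware devices generally have unique MAC addresses and product_uuid values, but some virtual machines may have duplicates. Kubernetes uses these values to uniquely identify the nodes in the cluster. If they are not unique on every node, the installation may fail.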
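The requirements above can be checked on each machine before going further. The following is only a sketch: the function names are made up for illustration, and the RAM threshold of 1700000 kB is an assumption chosen because a "2 GB" machine reports slightly less than 2 GB in MemTotal.

```shell
#!/bin/sh
# Preflight sketch for the prerequisites listed above (names are illustrative).

# meets_minimum LABEL ACTUAL REQUIRED -> prints a verdict, returns 1 when too low
meets_minimum() {
    if [ "$2" -ge "$3" ]; then
        echo "OK   $1: $2 (need >= $3)"
    else
        echo "FAIL $1: $2 (need >= $3)"
        return 1
    fi
}

# swap_is_off FILE -> succeeds when FILE (in /proc/swaps format) lists no swap area.
# /proc/swaps always has one header line; any further line is an active swap area.
swap_is_off() {
    awk 'NR > 1 { active = 1 } END { exit active + 0 }' "$1"
}

# run_preflight -> checks the machine it runs on (Linux only)
run_preflight() {
    cpus=$(nproc)
    mem_kb=$(awk '/^MemTotal:/ { print $2 }' /proc/meminfo)
    meets_minimum "CPU cores" "$cpus" 2
    meets_minimum "RAM (kB)" "$mem_kb" 1700000   # assumed threshold, see above
    if swap_is_off /proc/swaps; then
        echo "OK   swap is off"
    else
        echo "FAIL swap is on"
    fi
    # These values must differ between nodes; compare them by hand across machines.
    echo "MAC addresses:"
    cat /sys/class/net/*/address
    echo "product_uuid: $(cat /sys/class/dmi/id/product_uuid 2>/dev/null)"
}

# Usage (reading product_uuid needs root):
#   run_preflight
```

Running run_preflight on master, node1, and node2 and comparing the last two items side by side is enough to spot cloned virtual machines.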

Hostname IP address OS

master 192.168.100.11 centos7

node1 192.168.100.12 centos7

node2 192.168.100.10 centos7

Disable SELinux and the firewall

setenforce 0

sed -ri '/^[^#]*SELINUX=/s#=.+$#=disabled#' /etc/selinux/config

systemctl stop firewalld

systemctl disable firewalld

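Note: perform the following steps on all nodes.

Set the hostname. On the management node, set it to master.

On the other hosts, replace master with the correct hostname (node1 or node2).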

[root@localhost ~]# hostnamectl set-hostname master

[root@localhost ~]# hostnamectl set-hostname node1

[root@localhost ~]# hostnamectl set-hostname node2

Configure hostname resolution in /etc/hosts (on every host)

cat <<EOF>>/etc/hosts

192.168.100.11 master

192.168.100.12 node1

192.168.100.10 node2

EOF

Disable swap: swapoff -a

#/dev/mapper/centos-swap swap swap defaults 0 0 ## comment out this line in /etc/fstab

[root@master ~]# swapoff -a

[root@master ~]# vim /etc/fstab

[root@master ~]# cat /etc/fstab

#

# /etc/fstab

# Created by anaconda on Wed Nov 22 10:35:19 2017

#

# Accessible filesystems, by reference, are maintained under '/dev/disk'

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info

#

/dev/mapper/centos-root / xfs defaults 0 0

UUID=30b929e5-a1eb-4f2b-bd9a-8bd32618b6cc /boot xfs defaults 0 0

#/dev/mapper/centos-swap swap swap defaults 0 0

[root@master ~]#

Or

swapoff -a

sed -i 's/.*swap.*/#&/' /etc/fstab

[root@node1 ~]# swapoff -a

[root@node1 ~]# sed -i 's/.*swap.*/#&/' /etc/fstab

[root@node1 ~]#

[root@node1 ~]# cat /etc/fstab

#

# /etc/fstab

# Created by anaconda on Wed Nov 22 10:35:19 2017

#

# Accessible filesystems, by reference, are maintained under '/dev/disk'

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info

#

/dev/mapper/centos-root / xfs defaults 0 0

UUID=30b929e5-a1eb-4f2b-bd9a-8bd32618b6cc /boot xfs defaults 0 0

#/dev/mapper/centos-swap swap swap defaults 0 0

[root@node1 ~]#
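One caveat with sed -i 's/.*swap.*/#&/' is that it prefixes every line containing the word "swap", including lines that are already commented, so running it twice stacks up extra # characters. A slightly more careful variant is sketched below; the function name comment_out_swap is an assumption for illustration, not part of any tool.

```shell
#!/bin/sh
# comment_out_swap FSTAB -> comments out active swap entries in FSTAB, in place.
# It skips lines that are already comments and only matches lines whose
# filesystem-type field (third column) is "swap", so it is safe to run twice.
comment_out_swap() {
    tmp=$(mktemp) || return 1
    awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$1" > "$tmp" &&
        cat "$tmp" > "$1"
    status=$?
    rm -f "$tmp"
    return $status
}

# Usage on a node, as root:
#   swapoff -a
#   comment_out_swap /etc/fstab
```

Writing the result back with cat rather than mv keeps the original file's owner and permissions.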

Configure kernel parameters so that bridged IPv4 traffic is passed to the iptables chains

[root@master ~]# vim /etc/sysctl.d/k8s.conf

[root@master ~]# cat /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

[root@master ~]# sysctl --system ## apply the configuration

* Applying /usr/lib/sysctl.d/00-system.conf ...

* Applying /usr/lib/sysctl.d/10-default-yama-scope.conf ...

kernel.yama.ptrace_scope = 0

* Applying /usr/lib/sysctl.d/50-default.conf ...

kernel.sysrq = 16

kernel.core_uses_pid = 1

net.ipv4.conf.default.rp_filter = 1

net.ipv4.conf.all.rp_filter = 1

net.ipv4.conf.default.accept_source_route = 0

net.ipv4.conf.all.accept_source_route = 0

net.ipv4.conf.default.promote_secondaries = 1

net.ipv4.conf.all.promote_secondaries = 1

fs.protected_hardlinks = 1

fs.protected_symlinks = 1

* Applying /etc/sysctl.d/99-sysctl.conf ...

* Applying /etc/sysctl.d/k8s.conf ...

net.ipv4.ip_forward = 1

* Applying /etc/sysctl.conf ...

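[root@master ~]# modprobe br_netfilter ## load the bridge netfilter module; the net.bridge.* settings only apply once it is loaded, so run sysctl --system again afterwards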
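In the sysctl --system output above, only net.ipv4.ip_forward is reported for k8s.conf, which suggests the two net.bridge.* keys were skipped because br_netfilter had not been loaded yet. A sketch of doing the same configuration in an order that avoids this, and that survives a reboot, follows. The function name and its ROOT parameter are assumptions added so the file contents can be previewed in a scratch directory first.

```shell
#!/bin/sh
# write_k8s_kernel_config ROOT -> writes both config files under ROOT.
# Pass "" as ROOT to write to the real /etc (needs root).
write_k8s_kernel_config() {
    root=$1
    mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d" || return 1
    # Load br_netfilter at every boot so the bridge sysctls exist when applied.
    echo br_netfilter > "$root/etc/modules-load.d/k8s.conf"
    cat > "$root/etc/sysctl.d/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}

# Usage on a node, as root (module first, then sysctl):
#   write_k8s_kernel_config ""
#   modprobe br_netfilter
#   sysctl --system
#   sysctl net.bridge.bridge-nf-call-iptables
```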

Configure China-local mirrors for the yum, EPEL, Kubernetes, and Docker repositories

mkdir -p /etc/yum.repos.d/repo.bak

mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/repo.bak

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.cloud.tencent.com/repo/centos7_base.repo

wget -O /etc/yum.repos.d/epel.repo http://mirrors.cloud.tencent.com/repo/epel-7.repo

Configure the China-local Kubernetes repository:

[root@master ~]# vim /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

Configure the Docker repository

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

yum clean all && yum makecache

Install kubeadm, docker, kubelet, and kubectl (on all nodes)

yum install -y docker-ce-18.06.1.ce-3.el7 kubelet-1.15.3 kubeadm-1.15.3 kubectl-1.15.3

systemctl enable docker && systemctl start docker

systemctl enable kubelet

[root@master /]# docker -v

docker version 18.06.1-ce, build e68fc7a

[root@master /]# kubelet --version

Kubernetes v1.15.3

Initialize the Kubernetes cluster on master. (Note: run the following commands on master only.)

kubeadm init --kubernetes-version=1.15.3 \

--apiserver-advertise-address=192.168.100.11 \

--image-repository registry.aliyuncs.com/google_containers \

--service-cidr=10.1.0.0/16 \

--pod-network-cidr=10.244.0.0/16

[root@master /]# kubeadm init --kubernetes-version=1.15.3 \

> --apiserver-advertise-address=192.168.100.11 \

> --image-repository registry.aliyuncs.com/google_containers \

> --service-cidr=10.1.0.0/16 \

> --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.15.3

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [master localhost] and IPs [192.168.100.11 127.0.0.1 ::1]

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [master localhost] and IPs [192.168.100.11 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.1.0.1 192.168.100.11]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 17.502449 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.15" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: of5cct.tc5y1575yeu2alyi

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.100.11:6443 --token of5cct.tc5y1575yeu2alyi \

--discovery-token-ca-cert-hash sha256:e438b7bbadc9aeaadfe6ad139c70cd2de7f9af48d70e90c95a5f5f66dccf225f

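By default kubeadm pulls the required images from k8s.gcr.io, which is not reachable from mainland China, so --image-repository is used to point at the Alibaba Cloud mirror registry.

After the cluster initializes successfully, the following is returned:

kubeadm join 192.168.100.11:6443 --token of5cct.tc5y1575yeu2alyi \

--discovery-token-ca-cert-hash sha256:e438b7bbadc9aeaadfe6ad139c70cd2de7f9af48d70e90c95a5f5f66dccf225f

Record this last part of the output. It must be run on the other nodes when they join the Kubernetes cluster.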
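If the join command was not recorded, or the bootstrap token has expired (kubeadm tokens are valid for 24 hours by default), both parts can be regenerated on master. The sketch below recomputes the value that follows sha256: from the cluster CA certificate using the openssl pipeline given in the kubeadm documentation. The function name ca_cert_hash is an assumption for illustration.

```shell
#!/bin/sh
# ca_cert_hash CERT -> prints the SHA-256 digest of the certificate's public key,
# i.e. the hex string kubeadm expects after "sha256:" in
# --discovery-token-ca-cert-hash.
ca_cert_hash() {
    openssl x509 -pubkey -noout -in "$1" |
        openssl rsa -pubin -outform der 2>/dev/null |
        openssl dgst -sha256 -hex |
        sed 's/^.* //'
}

# Usage on master, as root:
#   ca_cert_hash /etc/kubernetes/pki/ca.crt
#   kubeadm token list                          # show existing tokens and expiry
#   kubeadm token create --print-join-command   # new token plus full join command
```

In practice kubeadm token create --print-join-command is usually all that is needed; the hash function is mainly useful for checking that a saved join command still matches the cluster.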

Configure the kubectl tool

[root@master /]# mkdir -p /root/.kube

[root@master /]# cp /etc/kubernetes/admin.conf /root/.kube/config

[root@master /]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady master 5m30s v1.15.3

[root@master /]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

[root@master /]#

Deploy the flannel network. This step is essential: until a pod network is installed, nodes stay NotReady.

[root@master /]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.extensions/kube-flannel-ds-amd64 created

daemonset.extensions/kube-flannel-ds-arm64 created

daemonset.extensions/kube-flannel-ds-arm created

daemonset.extensions/kube-flannel-ds-ppc64le created

daemonset.extensions/kube-flannel-ds-s390x created

[root@master /]#

Deploy the worker nodes

Run the following command on every worker node. It is taken from the output of the master initialization.

kubeadm join 192.168.100.11:6443 --token of5cct.tc5y1575yeu2alyi \

--discovery-token-ca-cert-hash sha256:e438b7bbadc9aeaadfe6ad139c70cd2de7f9af48d70e90c95a5f5f66dccf225f

[root@node1 /]# kubeadm join 192.168.100.11:6443 --token of5cct.tc5y1575yeu2alyi \

> --discovery-token-ca-cert-hash sha256:e438b7bbadc9aeaadfe6ad139c70cd2de7f9af48d70e90c95a5f5f66dccf225f

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.15" ConfigMap in the kube-system namespace

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Activating the kubelet service

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@node1 /]#
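The IsDockerSystemdCheck warning in the output above means Docker is using the cgroupfs cgroup driver while kubeadm recommends systemd. One way to address it is sketched below, under two assumptions: that no /etc/docker/daemon.json exists yet (the function overwrites the file), and that it is run before kubeadm init or kubeadm join. On a node that has already joined, the kubelet was configured for the driver detected at join time, so switching Docker alone would leave the two out of step. The function name is illustrative.

```shell
#!/bin/sh
# write_docker_daemon_json PATH -> writes a daemon.json that selects the
# systemd cgroup driver. It overwrites PATH; merge by hand if the file
# already contains other settings.
write_docker_daemon_json() {
    mkdir -p "$(dirname "$1")" || return 1
    cat > "$1" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Usage on each node, as root, before kubeadm init/join:
#   write_docker_daemon_json /etc/docker/daemon.json
#   systemctl restart docker
#   docker info | grep -i 'cgroup driver'
```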

# On the master node, check the cluster status

[root@master /]# kubectl get node

NAME STATUS ROLES AGE VERSION

master Ready master 61m v1.15.3

node1 Ready <none> 9m50s v1.15.3

node2 NotReady <none> 29s v1.15.3

[root@master /]#
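In the output above node2 is still NotReady 29 seconds after joining, which is normal while its flannel pod starts. Rather than re-running kubectl get node by hand, the wait can be scripted. This is a minimal sketch; the function names, the 5-second interval, and the default of 60 tries are arbitrary choices for illustration.

```shell
#!/bin/sh
# not_ready_nodes -> reads `kubectl get nodes --no-headers` output on stdin and
# prints the name of every node whose STATUS column is not exactly "Ready".
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# wait_for_nodes [TRIES] -> polls every 5 seconds until all nodes are Ready.
# Returns 1 if kubectl fails or the nodes are still not Ready after TRIES polls.
wait_for_nodes() {
    tries=${1:-60}
    while [ "$tries" -gt 0 ]; do
        nodes=$(kubectl get nodes --no-headers) || return 1
        pending=$(printf '%s\n' "$nodes" | not_ready_nodes)
        if [ -z "$pending" ]; then
            return 0
        fi
        echo "waiting for: $pending"
        tries=$((tries - 1))
        sleep 5
    done
    return 1
}

# Usage on master:
#   wait_for_nodes && echo "all nodes Ready"
```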

Deploying the Dashboard

Run the following on master only:

Download the YAML file that creates the Dashboard

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta4/aio/deploy/recommended.yaml

[root@master /]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta4/aio/deploy/recommended.yaml

--2022-07-15 15:34:42-- https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta4/aio/deploy/recommended.yaml

Resolving raw.githubusercontent.com (raw.githubusercontent.com)... 185.199.108.133, 185.199.109.133, 185.199.111.133, ...

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|185.199.108.133|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 7059 (6.9K) [text/plain]

Saving to: 'recommended.yaml'

100%[==================================>] 7,059 16.2KB/s in 0.4s

2022-07-15 15:34:48 (16.2 KB/s) - 'recommended.yaml' saved [7059/7059]

[root@master /]# ls

bin dev home lib64 mnt proc root sbin sys usr

boot etc lib media opt recommended.yaml run srv tmp var

[root@master /]#

Expose the Dashboard on NodePort 32443, either with the sed command below or by editing recommended.yaml by hand

[root@master /]# sed -i '/targetPort:/a\ \ \ \ \ \ nodePort: 32443\n\ \ type: NodePort' recommended.yaml

Apply the Dashboard manifest

[root@master /]# kubectl apply -f recommended.yaml

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

namespace/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

serviceaccount/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

service/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

secret/kubernetes-dashboard-certs configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

secret/kubernetes-dashboard-csrf configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

secret/kubernetes-dashboard-key-holder configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

configmap/kubernetes-dashboard-settings configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

role.rbac.authorization.k8s.io/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

deployment.apps/kubernetes-dashboard configured

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

deployment.apps/dashboard-metrics-scraper configured

The Service "dashboard-metrics-scraper" is invalid: spec.ports[0].nodePort: Invalid value: 32443: provided port is already allocated

[root@master /]#

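For readers unsure how to interpret this output: the repeated warnings are harmless and only indicate that these resources already existed without the annotation that kubectl apply maintains. The final error comes from the sed expression, which matches every targetPort: line in recommended.yaml and therefore also tries to give the dashboard-metrics-scraper Service nodePort 32443, a port already allocated to the kubernetes-dashboard Service.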
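The "provided port is already allocated" error can be avoided by adding the NodePort only to the kubernetes-dashboard Service instead of after every targetPort: line. The awk sketch below does that. It assumes the layout of recommended.yaml, namely documents separated by ---, a top-level kind: Service line, and the name at two-space indentation under metadata. The function name is made up for illustration.

```shell
#!/bin/sh
# add_dashboard_nodeport FILE PORT -> prints FILE with "nodePort: PORT" and
# "type: NodePort" added to the Service named kubernetes-dashboard only.
add_dashboard_nodeport() {
    awk -v port="$2" '
        /^---/                           { is_service = 0; is_dashboard = 0 }
        /^kind: Service$/                { is_service = 1 }
        /^  name: kubernetes-dashboard$/ { is_dashboard = 1 }
        { print }
        /targetPort:/ && is_service && is_dashboard {
            # Same two lines the sed command appends, but for one Service only
            print "      nodePort: " port
            print "  type: NodePort"
        }
    ' "$1"
}

# Usage on master, starting from a freshly downloaded file:
#   add_dashboard_nodeport recommended.yaml 32443 > dashboard-nodeport.yaml
#   kubectl apply -f dashboard-nodeport.yaml
```

Writing to a new file keeps the downloaded manifest unchanged, so the result can be compared with diff before it is applied.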

Check the status of the related services

kubectl get deployment kubernetes-dashboard -n kubernetes-dashboard

kubectl get pods -n kubernetes-dashboard -o wide

kubectl get services -n kubernetes-dashboard

netstat -ntlp|grep 32443

[root@master /]# kubectl get deployment kubernetes-dashboard -n kubernetes-dashboard

NAME READY UP-TO-DATE AVAILABLE AGE

kubernetes-dashboard 1/1 1 1 9m3s

[root@master /]# kubectl get pods -n kubernetes-dashboard -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

dashboard-metrics-scraper-fb986f88d-dk2r5 1/1 Running 0 9m11s 10.244.2.2 node2 <none> <none>

kubernetes-dashboard-6bb65fcc49-dx6p7 1/1 Running 0 9m11s 10.244.1.2 node1 <none> <none>

[root@master /]# kubectl get services -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes-dashboard NodePort 10.1.167.56 <none> 443:32443/TCP 9m20s

[root@master /]# netstat -ntlp|grep 32443

tcp6 0 0 :::32443 :::* LISTEN 14589/kube-proxy

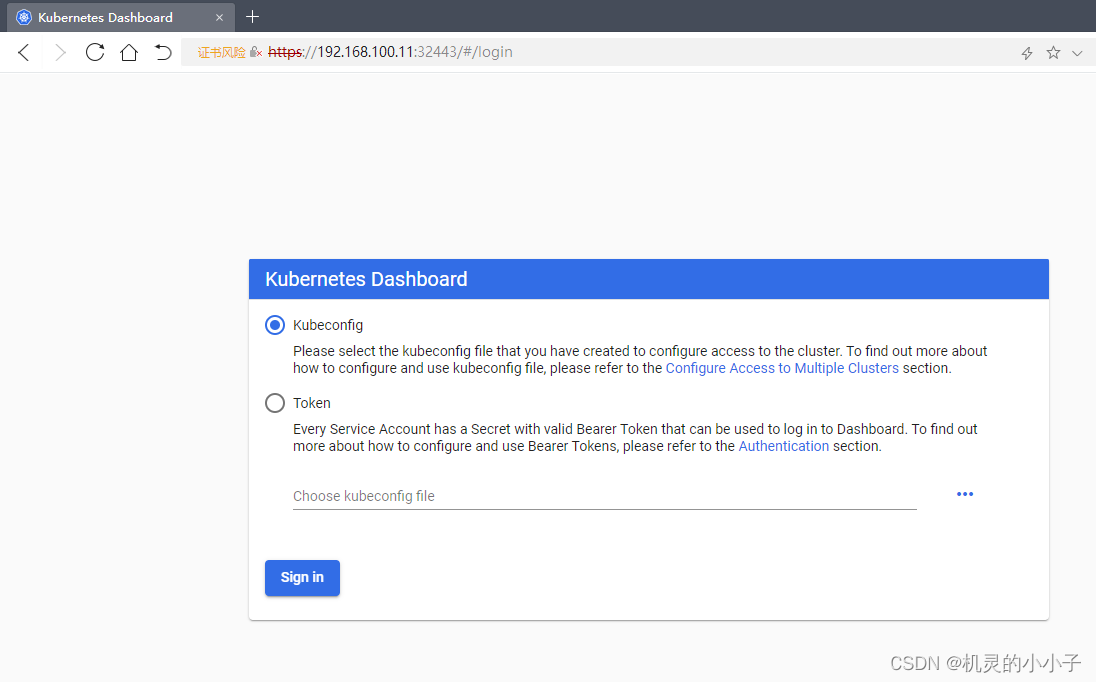

Enter the Dashboard address in Firefox:

https://192.168.100.11:32443

Create an admin service account and view the authentication token used to access the Dashboard

[root@master ~]# kubectl create serviceaccount dashboard-admin -n kubernetes-dashboard

[root@master ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:dashboard-admin

[root@master ~]# kubectl describe secrets -n kubernetes-dashboard $(kubectl -n kubernetes-dashboard get secret | awk '/dashboard-admin/{print $1}')

Output

[root@master /]# kubectl create serviceaccount dashboard-admin -n kubernetes-dashboard

serviceaccount/dashboard-admin created

[root@master /]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:dashboard-admin

clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created

[root@master /]# kubectl describe secrets -n kubernetes-dashboard $(kubectl -n kubernetes-dashboard get secret | awk '/dashboard-admin/{print $1}')

Name: dashboard-admin-token-6xxvs

Namespace: kubernetes-dashboard

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin

kubernetes.io/service-account.uid: cac425d1-80cb-408f-b0a0-1c0cf653a2b0

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1025 bytes

namespace: 20 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tNnh4dnMiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiY2FjNDI1ZDEtODBjYi00MDhmLWIwYTAtMWMwY2Y2NTNhMmIwIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmVybmV0ZXMtZGFzaGJvYXJkOmRhc2hib2FyZC1hZG1pbiJ9.FGGz5hITquYuGd8kLGUK1EI_ZT7siE5cFDY_82et1j-oMhui1YATFxgF_5wnVWNQj5BPiIP0IVqpzvoZ7MVj44KIoVDNE1gC6tdspoPiuMG5VmppaswVRD3seV4wPEf_eZNtmHDAlLh90FSorcs0EaLK0RTV2hAmhYPbjIXRKUCms44_h85TuVHrMfbSeoqTS3EG_G-9phFyx9RFdVuNWRxqeuRrfKL-9zOKqm39l3WepuS5ExxvgbaQXvHhAL1OoJ77YV0yCWGZ8nqNOs1K3pVSMWFqlzX1-Dz6mrIewWoOD44Oy6yVXE6yLe3I8KjHaZrN0jLASILoBhySsTcBew

[root@master /]#
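Only the token field of this output is needed for login, and copying a string that long by hand is error-prone. A small filter that prints just the token is sketched below; the function name is an assumption for illustration. Because the dashboard-admin account is bound to cluster-admin, the token gives full control of the cluster and is best kept out of shared logs and screenshots.

```shell
#!/bin/sh
# token_from_describe -> reads `kubectl describe secret` output on stdin and
# prints only the value of the "token:" field.
token_from_describe() {
    awk '$1 == "token:" { print $2 }'
}

# Usage on master, reusing the secret lookup from the commands above:
#   secret=$(kubectl -n kubernetes-dashboard get secret | awk '/dashboard-admin/{print $1}')
#   kubectl -n kubernetes-dashboard describe secret "$secret" | token_from_describe
# Equivalent without text parsing (the stored value is base64-encoded):
#   kubectl -n kubernetes-dashboard get secret "$secret" -o jsonpath='{.data.token}' | base64 -d
```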

Open https://192.168.100.11:32443, choose the Token option, and paste the value of the token field from the output above.

Login succeeds, which means the K8s deployment is working.

Fixing Chrome being unable to open kubernetes-dashboard

The commands are as follows

# Create a directory to hold the certificate

mkdir key

cd key

# Generate the certificate

openssl genrsa -out dashboard.key 2048

openssl req -new -out dashboard.csr -key dashboard.key -subj '/CN=192.168.100.10'

openssl x509 -req -in dashboard.csr -signkey dashboard.key -out dashboard.crt

# Delete the existing certificate secret

kubectl delete secret kubernetes-dashboard-certs -n kubernetes-dashboard

# Create the new certificate secret

kubectl create secret generic kubernetes-dashboard-certs --from-file=dashboard.key --from-file=dashboard.crt -n kubernetes-dashboard

# List the pods

kubectl get pod -n kubernetes-dashboard

# Restart the pod

kubectl delete pod $(kubectl get pod -n kubernetes-dashboard|awk '/kubernetes-dashboard/{print $1}') -n kubernetes-dashboard

Result

[root@master ~]# mkdir key

[root@master ~]#

[root@master ~]# cd key/

[root@master key]# ls

[root@master key]# openssl genrsa -out dashboard.key 2048

Generating RSA private key, 2048 bit long modulus

........................................................................+++

.........................+++

e is 65537 (0x10001)

[root@master key]# openssl req -new -out dashboard.csr -key dashboard.key -subj '/CN=192.168.100.10'

[root@master key]#

[root@master key]# openssl x509 -req -in dashboard.csr -signkey dashboard.key -out dashboard.crt

Signature ok

subject=/CN=192.168.100.10

Getting Private key

[root@master key]# kubectl delete secret kubernetes-dashboard-certs -n kubernetes-dashboard

secret "kubernetes-dashboard-certs" deleted

[root@master key]#

[root@master key]# kubectl create secret generic kubernetes-dashboard-certs --from-file=dashboard.key --from-file=dashboard.crt -n kubernetes-dashboard

secret/kubernetes-dashboard-certs created

[root@master key]#

[root@master key]# kubectl get pod -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-fb986f88d-dk2r5 1/1 Running 0 36m

kubernetes-dashboard-6bb65fcc49-dx6p7 1/1 Running 0 36m

[root@master key]# kubectl delete pod $(kubectl get pod -n kubernetes-dashboard|awk '/kubernetes-dashboard/{print $1}') -n kubernetes-dashboard

pod "kubernetes-dashboard-6bb65fcc49-dx6p7" deleted
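Note that openssl x509 -req without a -days option issues a certificate valid for only 30 days, so the Dashboard certificate created above will expire after a month. A sketch that produces the same two files in one step with an explicit lifetime follows. The function name and the 365-day default are assumptions, and the CN is whatever address the Dashboard is opened with.

```shell
#!/bin/sh
# make_dashboard_cert DIR CN [DAYS] -> writes a self-signed dashboard.key and
# dashboard.crt into DIR, valid for DAYS days (365 if omitted).
make_dashboard_cert() {
    openssl req -x509 -newkey rsa:2048 -nodes \
        -keyout "$1/dashboard.key" -out "$1/dashboard.crt" \
        -subj "/CN=$2" -days "${3:-365}" 2>/dev/null
}

# Usage on master, followed by the same secret and pod steps as above:
#   mkdir -p key
#   make_dashboard_cert key 192.168.100.10
#   openssl x509 -in key/dashboard.crt -noout -subject -enddate
```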

Next

Deploy a service, nginx: create a Pod to verify that the cluster works.

The commands are as follows

kubectl get pod --all-namespaces -o wide

kubectl create deployment nginx --image=nginx

kubectl expose deployment nginx --port=80 --type=NodePort

Deployment process

[root@master key]# kubectl get pod --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-system coredns-bccdc95cf-xc8k5 1/1 Running 0 114m 10.244.0.2 master <none> <none>

kube-system coredns-bccdc95cf-xszw6 1/1 Running 0 114m 10.244.0.3 master <none> <none>

kube-system etcd-master 1/1 Running 0 113m 192.168.100.11 master <none> <none>

kube-system kube-apiserver-master 1/1 Running 0 113m 192.168.100.11 master <none> <none>

kube-system kube-controller-manager-master 1/1 Running 0 113m 192.168.100.11 master <none> <none>

kube-system kube-flannel-ds-amd64-2k4nv 1/1 Running 3 63m 192.168.100.12 node1 <none> <none>

kube-system kube-flannel-ds-amd64-rtgjg 1/1 Running 0 101m 192.168.100.11 master <none> <none>

kube-system kube-flannel-ds-amd64-wpqpq 1/1 Running 3 53m 192.168.100.10 node2 <none> <none>

kube-system kube-proxy-7x677 1/1 Running 0 114m 192.168.100.11 master <none> <none>

kube-system kube-proxy-th6gg 1/1 Running 0 53m 192.168.100.10 node2 <none> <none>

kube-system kube-proxy-vwptg 1/1 Running 0 63m 192.168.100.12 node1 <none> <none>

kube-system kube-scheduler-master 1/1 Running 0 113m 192.168.100.11 master <none> <none>

kubernetes-dashboard dashboard-metrics-scraper-fb986f88d-dk2r5 1/1 Running 0 43m 10.244.2.2 node2 <none> <none>

kubernetes-dashboard kubernetes-dashboard-6bb65fcc49-hl4hs 1/1 Running 0 6m36s 10.244.1.3 node1 <none> <none>

[root@master key]# kubectl create deployment nginx --image=nginx

deployment.apps/nginx created

[root@master key]# kubectl expose deployment nginx --port=80 --type=NodePort

service/nginx exposed

Verify (it may take a few minutes for the result to appear)

[root@master key]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-554b9c67f9-4xldr 1/1 Running 0 10m

[root@master key]#

[root@master key]# kubectl get pod,svc

NAME READY STATUS RESTARTS AGE

pod/nginx-554b9c67f9-4xldr 1/1 Running 0 11m

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.1.0.1 <none> 443/TCP 126m

service/nginx NodePort 10.1.23.254 <none> 80:30858/TCP 10m

[root@master key]#
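The NodePort assigned to the nginx Service (30858 in the PORT(S) column above) is chosen by Kubernetes and will differ on another cluster, so it helps to extract it rather than hard-code it. A minimal sketch follows; the function name node_port_of is an assumption for illustration.

```shell
#!/bin/sh
# node_port_of PORTS -> extracts the NodePort from a PORT(S) cell
# such as "80:30858/TCP".
node_port_of() {
    port=${1#*:}        # drop everything up to the first colon, e.g. "30858/TCP"
    echo "${port%%/*}"  # drop the protocol suffix, e.g. "30858"
}

# Usage on master:
#   ports=$(kubectl get svc nginx --no-headers | awk '{ print $5 }')
#   curl -sI "http://192.168.100.11:$(node_port_of "$ports")"
# The port can also be read directly:
#   kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'
```

Because a NodePort Service listens on every node, the same port works with the address of master, node1, or node2.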

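Open the service in a browser using a node IP and the NodePort shown above (for example http://192.168.100.11:30858). The nginx welcome page appears, confirming the deployment.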
